
Aspheric departure and beam footprint diagram

This four-quadrant diagram summarizes the null test geometry. One quadrant shows the asphere departure, in units of the test wavelength (usually 632.82 nm), from a "best fit" sphere versus radial coordinate in lens units. "Best fit" is here defined as matching the asphere at the vertex and edge.
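The vertex-and-edge "best fit" definition can be illustrated numerically. The following is a minimal sketch, not Diffraction International's method: it assumes a standard even-asphere sag equation, lens units of millimetres, and hypothetical function names chosen for this example.

```python
import math


def asphere_sag(r, curvature, conic, coeffs=()):
    """Even-asphere sag in lens units; coeffs[i] multiplies r**(2*i + 4)."""
    root = math.sqrt(1.0 - (1.0 + conic) * curvature**2 * r**2)
    base = curvature * r**2 / (1.0 + root)
    return base + sum(a * r ** (2 * i + 4) for i, a in enumerate(coeffs))


def vertex_edge_sphere_radius(r_edge, z_edge):
    """Radius of the sphere through the vertex (0, 0) and the edge point.

    Assumes z_edge is non-zero (a flat surface has no finite radius).
    """
    return (r_edge**2 + z_edge**2) / (2.0 * z_edge)


def sphere_sag(r, radius):
    """Sag of a sphere of signed radius, measured from its vertex."""
    return radius - math.copysign(math.sqrt(radius**2 - r**2), radius)


def departure_waves(r, r_edge, curvature, conic, coeffs=(),
                    wavelength_nm=632.82, lens_unit_m=1e-3):
    """Asphere minus vertex-and-edge sphere, in units of the test wavelength.

    lens_unit_m = 1e-3 assumes lens units are millimetres (an assumption).
    """
    z_edge = asphere_sag(r_edge, curvature, conic, coeffs)
    radius = vertex_edge_sphere_radius(r_edge, z_edge)
    departure = asphere_sag(r, curvature, conic, coeffs) - sphere_sag(r, radius)
    return departure * lens_unit_m / (wavelength_nm * 1e-9)


# Example: a paraboloid (conic = -1) with a 100 mm vertex radius and a
# 10 mm semi-aperture, sampled at the vertex, mid-zone, and edge.
samples = [departure_waves(r, 10.0, 1.0 / 100.0, -1.0) for r in (0.0, 5.0, 10.0)]
```

By construction the departure is zero at the vertex and at the edge, so the curve plotted against radial coordinate peaks somewhere in between.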

An "autocollimation" aperture produces a collimated wavefront, usually perpendicular to some planar feature on the test optic. It is used to align the test optic tilt and tip independent of the centration and focus. A "vertex" aperture focuses light onto the test optic vertex.

The box label is provided as an aid in identifying the correct CGH null. With experience, the information on the label is sufficient for positioning the CGH.

This is a partial tabulation of the OSLO double pass raytrace model; the complete lens file is included as an electronic appendix. The raytrace model begins and ends with a focused or collimated wavefront. The CGH appears as the surfaces labeled CGH Carrier and CGH Phase. This listing concludes with a tabulation of vertex coordinates with respect to the CGH face and with respect to the test optic.


This certifies that the CGH null meets all specifications of your purchase order and associated documentation, as well as Diffraction International's own.

These customized instructions should enable anyone experienced in interferometry to use the CGH null to perform an aspheric null test.

This oblique view shows the null test configuration with the CGH on the left and the test beam propagating to the right. If the scale is such that these are difficult to see, then this information is repeated in an enlarged view at the upper left of the drawing.

These diagrams show the apertures of the CGH null and the Alignment CGH (if any). The null aperture is the actual CGH null. A retro aperture sends the test wavefront back on itself to create a null interferogram and serves as an aid in aligning the CGH. HA50 Series Alignment CGHs consist entirely of retro apertures with the relevant ones shaded.


EdgeChk verification of encoding and digitization.Zero-order interferograms after phase etch.Zero-order interferograms before phase etch.Substrate interferograms before patterning.Aspheric departure and beam footprint diagram.CGH encoding and digitizationĪre performed using Diffraction International's proprietary HoloMask software.īoth OSLO and HoloMask can generate AutoCAD compatible graphic data. OSLO commands and proprietary OSLO CCL programs. Much of the design documentation is generated by standard To automate much of the design and documentation process and has few hard limits

#Zemax file to codev software#

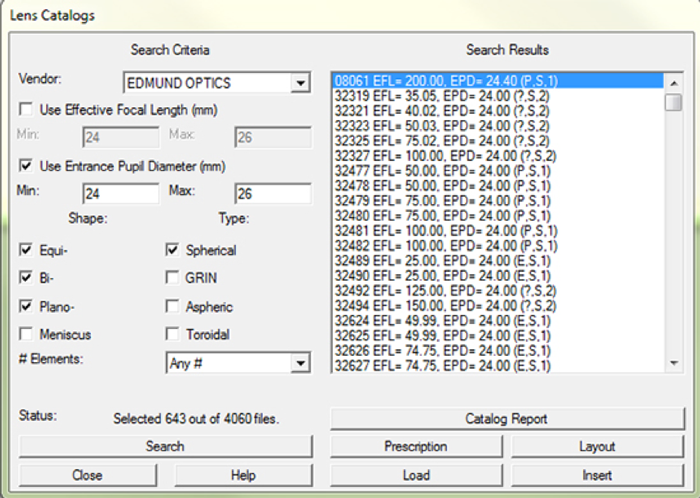

This software was selected because it can be programmed About the ZOS-API.User Analysis.User Guide to CGH Encoding and Fabrication Report CGH Report Guideĭiffraction International designs and analyzes aspheric null tests using OSLO The User Analysis of this article is written in C#.įor more information about the User-Analysis, check the built-in Help file under The Programming Tab. User analyses can be written using either C++ (COM) or C# (.NET). in this mode only changes to a copy of the system are allowed). This mode does not allow changes to the current lens system or the user interface (i.e. The displayed data is using the modern graphics provided in OpticStudio for most analyses. The User Analysis Mode is used to populate data for custom analysis.


LiDAR (Light Detection and Ranging) is a sensor technology that can help create 3D digital maps of the environment by measuring the time taken for emitted light to reflect from surrounding objects and return to a receiver. Such 3D mapping is becoming essential in the automotive industry as a key enabling technology of autonomous vehicles. Outside of the automotive industry, LiDAR is used on mobile devices for features like augmented reality, measuring distances, and blurring backgrounds in photos and videos.

In this blog post we will show how a User Analysis can be created using the ZOS-API to measure the time-of-flight (TOF) of a LiDAR system. The analysis will read a ZRD file, extract the data, and plot the time-of-flight of rays reaching the detector.

ZOS-API (Application Programming Interface) enables connections to and customization of OpticStudio. There are four program modes for connecting an application program to OpticStudio, which fall into two main categories:

1) Full Control (Standalone and User Extensions modes), in which the user generally has full control over the lens design and user interface.
2) Limited Access (User Operands and User Analysis modes), in which the user is locked down to working with a copy of the existing lens file.
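The time-of-flight idea behind the LiDAR analysis can be sketched independently of OpticStudio. The snippet below is only an illustration in Python, not the C# User Analysis from the post: it does not read a ZRD file or call the ZOS-API, and it assumes the total path length of each ray (in metres) has already been extracted. All function names are hypothetical.

```python
from collections import Counter

C_M_PER_S = 299_792_458.0  # speed of light in vacuum, m/s


def time_of_flight_s(path_length_m, refractive_index=1.0):
    """Travel time of light along a path of the given geometric length."""
    return path_length_m * refractive_index / C_M_PER_S


def target_range_m(round_trip_time_s):
    """Distance to the target: light covers the range twice (out and back)."""
    return C_M_PER_S * round_trip_time_s / 2.0


def tof_histogram(path_lengths_m, bin_width_s):
    """Count rays per time-of-flight bin; keys are integer bin indices."""
    counts = Counter()
    for length in path_lengths_m:
        counts[int(time_of_flight_s(length) // bin_width_s)] += 1
    return dict(sorted(counts.items()))


# Hypothetical round-trip path lengths for three detected rays, in metres.
ray_paths_m = [20.0, 20.1, 40.0]
histogram = tof_histogram(ray_paths_m, bin_width_s=10e-9)  # 10 ns bins
nearest_target_m = target_range_m(time_of_flight_s(min(ray_paths_m)))
```

Binning the arrival times like this is one simple way to get the data for a time-of-flight plot; a real analysis would take the path lengths from the ray database rather than a hard-coded list.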